Software & AI · April 30, 2026

Digital sovereignty: one word, four different problems

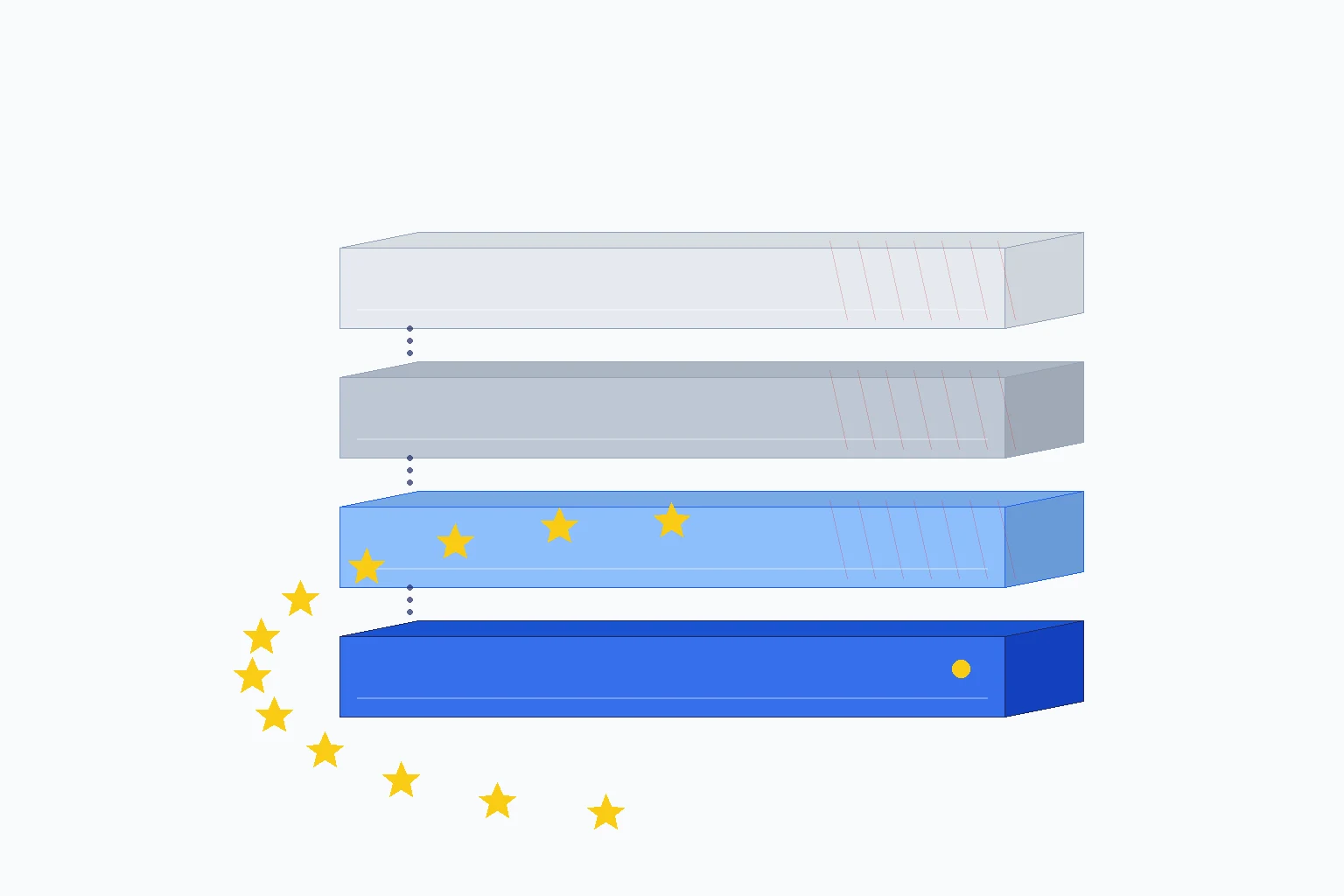

In Europe we talk about GDPR, data residency and Schrems II. But digital sovereignty is a four-layer stack, and we are losing ground on three of the four layers, even though something is finally moving in 2026.


I'm writing this article with Claude Code open in another window on my computer. I'm saying so up front because that's precisely the point: I build EU-sovereign AI infrastructure for a living, and I use an American coding assistant every day. Not out of laziness, and not out of hypocrisy, but for a reason that is itself the problem I want to talk about.

A European alternative at the same level of quality, today, does not exist.

And as I write this in April 2026, with Mistral raising 830 million dollars in debt, largely from European banks, to build a data center outside Paris, and the EU launching the SEAL Framework for sovereign cloud, the bigger problem is still on the table, and it isn't being talked about enough.

In the mainstream European debate, "digital sovereignty" gets reduced to a handful of concepts: GDPR, data residency, Schrems II, the Data Privacy Framework, cloud sovereignty. All correct. All important. All, however, about a single layer of the problem.

Digital sovereignty is a four-layer stack:

Layer 4 - Development toolchain (coding assistants, agent frameworks, MLOps)

Layer 3 - Models (LLM training, weights, fine-tuning)

Layer 2 - Hardware (GPUs, fabs, custom silicon)

Layer 1 - Data (storage, processing, residency, regulation)

Europe is a serious player only on Layer 1. On Layers 2, 3 and 4 it runs a structural deficit, even though in 2026 something is finally moving, and I'll come back to that. But let's take it in order, layer by layer, and look at why.

Four layers, four different situations

Layer 1 - Data: Europe's only structural advantage

On the regulatory front Europe is a global leader and sets standards that others follow. GDPR has applied since 2018. The EU AI Act (Regulation 2024/1689) is being phased in, with most obligations, including those for many high-risk systems, applying from August 2, 2026. Schrems II (CJEU ruling, 2020) rewrote the rules for data transfers outside the EU, and the 2023 Data Privacy Framework is already being litigated before the Court of Justice. In 2026 the SEAL Framework (Sovereignty Effectiveness Assurance Levels) was added, along with the four consortia that won the 180-million-euro Sovereign Cloud tender: Post Telecom + OVHcloud, STACKIT of the Schwarz Group, Scaleway, and Proximus + Mistral.

This is the so-called "Brussels effect": Europe writes the rules, and the rest of the world adapts. It's a real competitive asset. But the advantage isn't infinite. China has had PIPL and the Data Security Law in force since 2021. The United States is responding with the federal AI Action Plan (2025) and state-level initiatives such as the California AI Transparency Act.

Above all, regulation alone doesn't build capability. A 2026 Lunendonk data point captures the problem well: 57% of companies in the DACH region have no plan B for a hyperscaler outage, and 83% consider a "kill switch" scenario realistic. Compliance is not capability. Having the right rules isn't enough if the infrastructure to enforce them is missing underneath.

Layer 2 - Hardware: the most structural deficit

NVIDIA holds roughly 90% of the global AI GPU market (source: Jon Peddie Research). Leading-edge fabs, at 3 nanometers and below, are concentrated in Taiwan (TSMC), Korea (Samsung) and the United States (Intel). The European Court of Auditors' Special Report 12/2025 says so explicitly: Europe will not reach sub-7nm production capacity on schedule. Structural dependence on non-EU fabs is now confirmed by an official auditor.

Europe has ASML, which holds a global monopoly on EUV lithography. It's a strategic asset, but ASML sells to everyone: in practice it enables other people's stacks rather than building a European one. STMicroelectronics and NXP produce volume silicon on mature nodes, not frontier AI chips. GlobalFoundries announced a 1.1-billion investment in Dresden (Project SPRINT, October 2025), but again in volume technologies, not frontier ones. EuroHPC, with LUMI in Finland and Leonardo in Italy, is public infrastructure at academic, not industrial, scale. The EU Chips Act mobilizes 43 billion euros, but spread over years and across very different use cases.

The most revealing strategic data point arrived in December 2025: the U.S. administration authorized NVIDIA to sell H200 GPUs to China in exchange for 25% of the sales. NVIDIA is now officially an instrument of U.S. geopolitical leverage. Whoever buys NVIDIA (and today 90% of global AI compute is NVIDIA) depends on Washington for access, price and commercial terms. This wasn't true five years ago. It will be increasingly true over the next five.

Layer 3 - Models: there's Mistral, and finally it's no longer alone

On the model layer, the United States dominates the frontier: according to Dealroom, OpenAI has raised 180 billion dollars in total and Anthropic 59 billion. China competes at near parity with DeepSeek, Alibaba's Qwen, Kimi and Zhipu, a rich and fast-evolving ecosystem. Europe has Mistral.

And in 2026 Mistral stopped being the exception. Its Series C (September 2025) raised 1.7 billion euros at a valuation of around 11.7 billion. Then, on March 30, 2026, it secured 830 million dollars of pure debt from a European consortium (BNP Paribas, Crédit Agricole CIB, HSBC, La Banque Postale, Natixis CIB, Bpifrance), with MUFG as the only non-European partner (Japanese, not American). There is zero U.S. capital in the deal. With that money: 13,800 NVIDIA GB300 GPUs for the Bruyères-le-Châtel data center south of Paris, operational in Q2 2026 with 44 megawatts of capacity. Add the 1.2-billion investment in Sweden with EcoDataCenter announced in February 2026, and a target of 200 megawatts of European compute by the end of 2027. Mistral is now the third European sovereign compute provider, after OVHcloud and Scaleway. Its ARR grew from 20 to 400 million dollars in twelve months, and the stated target is 1 billion by the end of 2026.

Alongside Mistral there has been a real wave of funding over 2025-2026: Wayve (UK, 1.2 billion), AMI Labs (France, 1 billion), Nscale (UK, 2 billion). Aleph Alpha, by contrast, pivoted to consulting and is no longer a frontier trainer. Silo AI was acquired by AMD in July 2024. Hugging Face, headquartered in the U.S. but with French founders, remains the global open-source hub.

The compute gap, however, shows the real challenge. The Mistral Large training run is estimated at around 6,000 H100 GPUs; the GPT-4 training run is commonly estimated at around 25,000 A100 GPUs, the previous generation. The 2026 frontier models are more extreme still. Europe will not win on giant frontier models. It can win, and it is winning, on specialized vertical models and compact models (7B-70B) for regulated domains. That's exactly where it makes sense to invest, and where the window is still open.

Layer 4 - Toolchain: the silent deficit

This is the least-discussed and most insidious deficit. On coding assistants, Anthropic dominates with Claude Code, alongside Cursor, GitHub Copilot and OpenAI Codex. The options that are European, or at least usable on European terms, are few: Continue.dev (open source and self-hostable, though the company behind it is U.S.-based; technically solid but not product-grade out of the box), JetBrains (Czech-headquartered, an excellent IDE maker but not an AI-assistance leader), and little else. On agent frameworks: LangChain, LlamaIndex, CrewAI, all U.S. On MLOps platforms: Weights & Biases and MLflow, again U.S.

The deficit is underestimated because it's invisible. People building software in Europe don't realize how much of their productivity depends on these tools until access to them gets restricted. On this layer European open source is more vibrant (llama.cpp is Bulgarian, Hugging Face has French founders, the Hetzner ecosystem holds up well), but it's fragmented, lacks commercial players at scale, and lacks the product-grade polish of its U.S. competitors.

The November 12, 2025 open letter to the European Commission, signed by Mistral, Mozilla and Hugging Face, asks for exactly this: open source priority in the European sovereignty strategy, an EU Sovereign Tech Fund, and easier access to compute infrastructure. The Commission opened a call for evidence on January 6, 2026. It's a first signal that the problem has entered the official European political agenda. But we're only at the beginning.

The coding case: lived dependency

Let me run a concrete thought experiment. Suppose that tomorrow Anthropic restricts EU access to Claude Code for regulatory, commercial, or geopolitical reasons. It's not a remote scenario: U.S.-EU tensions over data flows have recurred since 2015 (Schrems I, Schrems II, and the Data Privacy Framework currently being litigated before the CJEU). Cursor adopts the same policy, and so does OpenAI Codex. What do I use for my work?

The alternative I can run on European terms today is Continue.dev as the IDE plugin, a local runtime like Ollama or EuLLM Engine (full disclosure: I build it), and an open-weight model like Qwen3-Coder or DeepSeek-Coder. It's a setup that works. But anyone who has tried it seriously knows the quality gap versus Claude Code is real: not 5%, not "almost the same". On a complex 200-line coding task it translates into 30-45 minutes of additional debugging. Over a month of work, that's 10-15 hours of engineering productivity lost per developer.

Let's extrapolate. Italy has roughly 500,000 software developers (Anitec-Assinform estimate, Osservatorio Competenze Digitali). In the Stack Overflow Developer Survey 2024, 76% of developers said they were using or planning to use AI tools in their development process (62% were already using them), and the 2025 share is higher still. If that productivity gap became permanent tomorrow, the Italian system would lose an advantage that was never on anyone's books. The risk isn't whether Anthropic actually cuts off access. The risk is that today nobody is pricing that possibility into the business plan of any Italian startup that depends on it.
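To make the extrapolation concrete, here is a back-of-the-envelope calculation. It takes the figures above (500,000 developers, 10-15 lost hours per developer per month). It also uses two assumptions that come from no cited source: a round 70% adoption share and a 160-hour working month. The result is an order of magnitude, not a forecast.

```python
# Back-of-the-envelope extrapolation of the coding-assistant productivity gap.
# DEVELOPERS_ITALY and the hours range come from the article; ADOPTION_SHARE and
# WORKING_HOURS_PER_MONTH are illustrative assumptions, not sourced figures.

DEVELOPERS_ITALY = 500_000            # Anitec-Assinform estimate cited in the text
ADOPTION_SHARE = 0.70                 # assumption: share of developers relying on AI assistants
HOURS_LOST_PER_DEV_MONTH = (10, 15)   # range given in the text
WORKING_HOURS_PER_MONTH = 160         # assumption: roughly 40 hours a week


def monthly_hours_lost(devs: int, share: float, hours_per_dev: float) -> float:
    """Total engineering hours lost per month across all affected developers."""
    return devs * share * hours_per_dev


def full_time_equivalents(hours: float, hours_per_fte: float = WORKING_HOURS_PER_MONTH) -> float:
    """Express lost hours as an equivalent number of full-time developers."""
    return hours / hours_per_fte


if __name__ == "__main__":
    for h in HOURS_LOST_PER_DEV_MONTH:
        lost = monthly_hours_lost(DEVELOPERS_ITALY, ADOPTION_SHARE, h)
        print(f"{h} h/dev/month -> {lost:,.0f} h/month, about {full_time_equivalents(lost):,.0f} FTE")
```

With these inputs the gap comes to 3.5 to 5.25 million engineering hours a month. That is roughly the output of 22,000 to 33,000 full-time developers. Changing `ADOPTION_SHARE` or the hours range lets you test other scenarios.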

This isn't anti-American propaganda. It's honest diagnosis. And it applies to coding the same way it applies to every other layer of the AI toolchain.

The stack as a form of power: gentle vassalage

The serious strategic debate on AI converges on one point. You find it in the Mario Draghi report "The Future of European Competitiveness" (September 2024), in Tony Blair Institute policy papers ("Sovereignty in the Age of AI", January 2026), in the analyses of the Special Competitive Studies Project founded by Eric Schmidt in 2021. The point is this: over the next ten years, the nations and blocs that control the AI stack will not exercise power the way they did in the twentieth century — territory, armies, pipelines. They will exercise it through technical standards, invisible cultural alignments, cognitive dependencies.

This is not abstract rhetoric. The most recent proof is the December 2025 H200 decision described in the hardware section: a chip supplier whose export licenses come with a 25% cut for Washington is an instrument of U.S. strategic leverage, and so is everything built on its chips.

Vassalage isn't only about hardware. It's also cognitive. When an Italian lawyer asks ChatGPT to draft a clause, the clause comes back reflecting American common-law patterns ahead of continental civil-law ones. When an Italian manager asks for leadership advice, they get Harvard Business School frameworks. When an Italian student asks for help with an essay, they get an Anglo-Saxon argumentative structure. Not because anyone is actively "colonizing", but because the model was trained there, by those people, with those moral, cultural and technical weights. The standard propagates without explicit coercion. That makes it more effective than coercion, and harder to perceive.

Europe doesn't risk "losing the AI war" in the nineteenth-century military sense. It risks something more subtle: becoming the organized consumer of an AI imagined elsewhere, with the cognitive, cultural and regulatory choices that flow from that. It's a form of gentle vassalage — slow, invisible, hard to perceive before it consolidates, and even harder to reverse once it has.

The advantage of recognizing it early is that we are still in a window where building pieces of our own stack is feasible. Mistral with its 830 million in European debt, the announced 20-billion-euro EU AI Gigafactory Programme, the 1.4-gigawatt MGX-Bpifrance-NVIDIA-Mistral investment near Paris announced in March 2026, the November 2025 France-Germany summit on digital sovereignty, the December 2025 EU Council Declaration: all are signs that the window is still open. But it's a window, not a permanent horizon. Ten years from now the debate may no longer be "how do we build the European stack" but "how do we negotiate, with some dignity, the status of subcontractor".

What to do: five roles, five actions

No complaining, no "the government should". Five roles, five concrete actions you can activate right now.
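Several of the actions below rest on the same artifact: a register of what your stack depends on. This applies to the founders' dependency map, the CIOs' plan-B question and the reader's "least mapped dependency". Here is a minimal Python sketch of such a register. The fields, the 1-5 criticality scale and the sample entries are hypothetical illustrations, not a standard. The point is that "no plan B" becomes a query you can run rather than a feeling.

```python
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical dependency register: field names, the 1-5 criticality scale and
# the sample entries below are illustrative assumptions, not an established standard.


@dataclass
class Dependency:
    name: str                             # tool, model or service you rely on
    layer: int                            # 1 data, 2 hardware, 3 models, 4 toolchain
    vendor_jurisdiction: str              # where the vendor is headquartered ("EU", "US", ...)
    criticality: int                      # 1 = nice to have ... 5 = work stops without it
    plan_b: Optional[str] = None          # documented fallback, None if there is none
    migration_days: Optional[int] = None  # estimated time to switch to plan B


def unmitigated(deps: List[Dependency]) -> List[Dependency]:
    """Dependencies with no documented plan B, most critical first (ties: lower layer first)."""
    return sorted(
        (d for d in deps if d.plan_b is None),
        key=lambda d: (-d.criticality, d.layer),
    )


def non_eu_share(deps: List[Dependency]) -> float:
    """Fraction of dependencies whose vendor is not labeled "EU"."""
    if not deps:
        return 0.0
    return sum(d.vendor_jurisdiction != "EU" for d in deps) / len(deps)


# Hypothetical example entries for a small team.
register = [
    Dependency("Coding assistant (Claude Code)", 4, "US", 4,
               plan_b="Continue.dev + local runtime + open-weight model", migration_days=5),
    Dependency("Hosted LLM API for the product", 3, "US", 5),
    Dependency("GPU cloud for fine-tuning", 2, "US", 3),
    Dependency("Object storage", 1, "EU", 5, plan_b="Second EU provider", migration_days=30),
]

if __name__ == "__main__":
    for d in unmitigated(register):
        print(f"[criticality {d.criticality}] layer {d.layer}: {d.name} ({d.vendor_jurisdiction}), no plan B")
    print(f"Non-EU share of mapped dependencies: {non_eu_share(register):.0%}")
```

With the sample data, the two entries without a plan B surface first. The hosted LLM API comes ahead of the GPU cloud because it is more critical. Three of the four dependencies are non-EU. A spreadsheet with the same columns works just as well. What matters is that the plan-B column exists and gets filled in.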

For founders and developers

Treat the EU-friendly stack as a conscious default, not a fashion. Build backup capability even where you currently use U.S. SaaS. Contribute to the critical open source projects of the European ecosystem: llama.cpp, Continue.dev, Mistral, EuLLM, Hugging Face. Track the critical dependencies of your stack the way you track technical debt: with a map, priorities, and a documented mitigation plan.

For European investors

Understand that Layers 3 (models) and 4 (toolchain) are still open to European founders. You don't compete on giant frontier models; you compete on specialized vertical models for regulated domains (legal AI, medical AI, financial AI) and on dedicated toolchains (vertical coding assistants, agent frameworks, AI Act compliance toolkits). The Mistral debt deal of March 30, 2026 opens up a new financing option: AI infrastructure is now a bankable asset class, no longer equity-only. The next European AI unicorns will come from these spaces, not from a "European GPT" that isn't coming.

For policymakers

The EU AI Act is world-leading as a regulatory framework, but on its own it doesn't build capability. Three structural interventions are needed. First: turn the SEAL Framework into binding law via the Cloud and AI Development Act, without diluting it under hyperscaler lobbying pressure. Second: respond to the Commission's call for evidence opened on January 6, 2026 with a real EU Sovereign Tech Fund for European open source AI (the explicit ask of Mistral, Mozilla and Hugging Face in their November 12, 2025 open letter). Third: scale EuroHPC from academic to industrial grade, by an order of magnitude. The window for doing all three is narrow.

For CIOs and CTOs procuring IT

Stop using "compliance" as the only vendor metric. Add "supply-chain dependency risk" as a formal line item in AI vendor evaluations. The question to ask every supplier: "If your upstream model provider cuts EU access tomorrow for regulatory, commercial, or geopolitical reasons, what is your documented plan B? How long would migration take, and with what service degradation?" The Lunendonk figures are a concrete warning: 57% of DACH companies have no plan B for a hyperscaler outage, and 83% consider a "kill switch" scenario realistic. Don't be among that 57%.

For whoever is reading this article

Sharing it isn't enough. The question to ask yourself is: in your company, your startup, your team, what is the most critical and least mapped dependency on the AI stack today? Start there. Mapping is the first act of sovereignty.

A choice you can't see today

Europe isn't losing the AI challenge because it's technically behind. It's losing because it talks about sovereignty on only one layer while the problem is structural across four. Recognizing this isn't defeatism — it's the first step toward building something other than a regulatory bubble that won't survive the first shift in geopolitical winds.

In 2026 something is finally moving. Mistral raises 830 million dollars in debt, largely from European banks. The EU launches the SEAL Framework. France and Germany converge on digital sovereignty. Mistral, Mozilla and Hugging Face co-sign an open letter on open source priority. The Tony Blair Institute publishes a serious analysis in January 2026. It has started. But it's only the start, and the gap to close is still enormous.

The debate over the next ten years comes down to one choice: Europe either accepts being the organized consumer of someone else's AI stack, or tries to build pieces of its own, even small ones, even imperfect ones, even with temporary quality gaps. The difference between the two choices isn't visible today. It will be visible in 2035. And in 2035 it will be too late to change course.

Disclosure: I am the founder of I3K Technologies and I build EuLLM, an open source vertical AI foundry for regulated EU sectors. That's one of the reasons I think about the problem in these terms, and a possible reason to read this article with informed skepticism.


Securvita S.r.l. — i3k.eu